#Sign Language Recognition

#Sign Language Translation

#Machine Learning

#Computer Vision

#YOLOv8

#MediaPipe

#Flask

#Python

#Hand Gesture Recognition

#Text-to-Speech

#Real-Time Detection

#Human Computer Interaction

#Deaf Communication Support

#Gesture Tracking

#Deep Learning

The Sign Language Recognition and Translation System Using Machine Learning and Computer Vision aims to reduce the communication barrier between Deaf or hard-of-hearing individuals and people who do not understand sign language. Its main goal is practical, user-friendly two-way communication: it translates text and speech into sign language, and it converts sign language into readable text and audible speech. The system is intended for settings such as schools, hospitals, offices, and public spaces, where communication support is often needed.

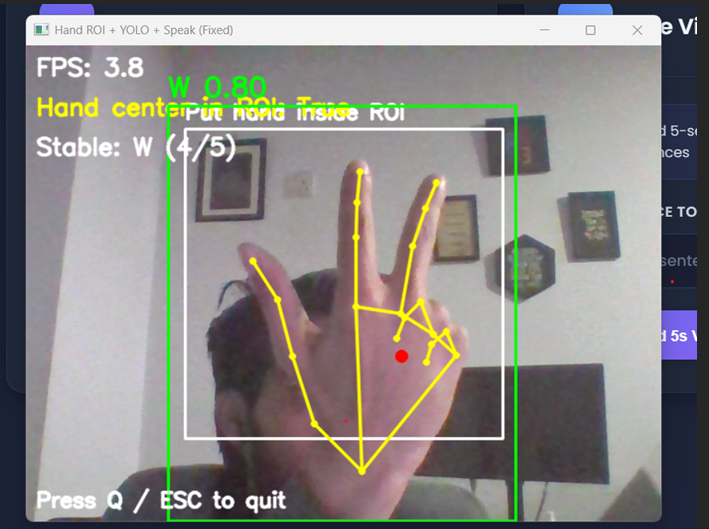

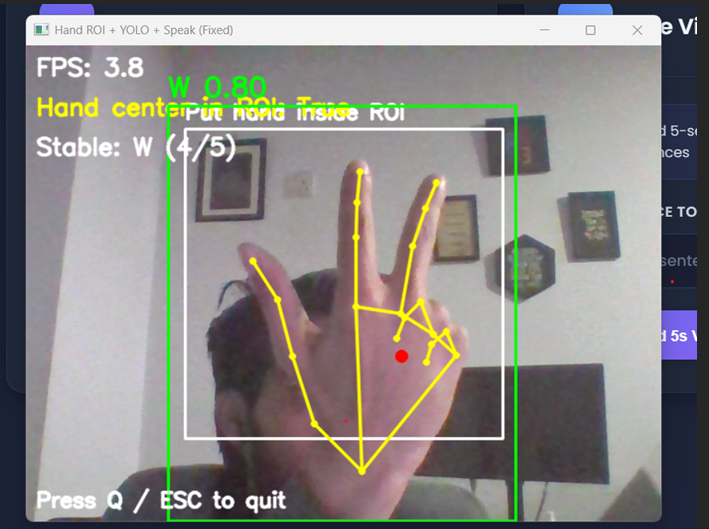

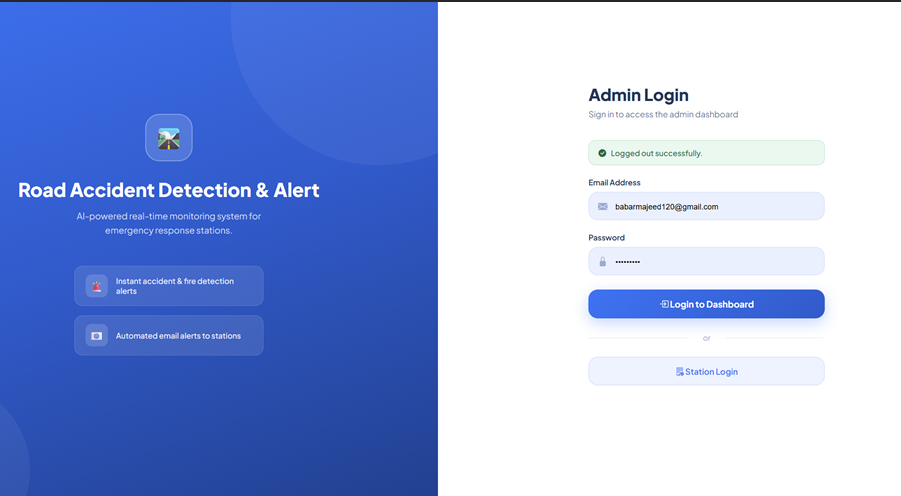

The system is built on a Python backend, with Flask handling application logic and integration. The frontend uses HTML, CSS, and JavaScript to provide a clean, interactive web interface. For sign alphabet recognition, a dataset is collected from Roboflow, uploaded to Google Colab, and used to train a YOLOv8 model. The trained model detects and classifies hand signs in image and video input quickly enough for real-time use.
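As a rough illustration of the alphabet-recognition step, the sketch below shows one way the output of a trained YOLOv8 model could be reduced to a single letter. It assumes the `ultralytics` package; the weights file name `best.pt`, the 0.5 confidence threshold, and the helper names `best_letter` and `detect_letter` are hypothetical and not taken from the project.

```python
def best_letter(detections, min_conf=0.5):
    """Return the label of the most confident detection, or None.

    `detections` is a list of (label, confidence) pairs.
    The 0.5 default threshold is an assumption for illustration.
    """
    kept = [d for d in detections if d[1] >= min_conf]
    if not kept:
        return None
    return max(kept, key=lambda d: d[1])[0]


def detect_letter(image_path, weights="best.pt", min_conf=0.5):
    """Run a trained YOLOv8 model on one image and pick the top letter.

    `weights` is a hypothetical path to the weights exported from training.
    """
    # Imported lazily so best_letter() works without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(weights)
    result = model(image_path)[0]  # one Results object per input image
    labels = [result.names[int(c)] for c in result.boxes.cls.tolist()]
    confs = result.boxes.conf.tolist()
    return best_letter(list(zip(labels, confs)), min_conf)
```

Keeping the selection logic in `best_letter` separate from the model call makes it easy to check the thresholding on its own, for example with `best_letter([("A", 0.91), ("B", 0.42)])`.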

For gesture-based and sentence-level recognition, the system uses MediaPipe to track hand landmarks, finger positions, and movement angles in real time. Because sign language depends on motion and timing as well as static hand shapes, MediaPipe plays an important role in interpreting dynamic gestures. The system captures key hand angles and movement patterns and stores them in JSON format. These stored patterns are later matched against live or recorded gestures to recognize predefined signs and sentence-level expressions.
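The angle-and-match idea described above can be sketched with plain Python. This is a simplified, single-frame version under stated assumptions: landmarks are given as `(x, y, z)` tuples such as those MediaPipe produces, each stored pattern is a flat list of joint angles, and the JSON layout, the 15-degree tolerance, and the function names are hypothetical. A dynamic gesture would need the same comparison applied across a sequence of frames.

```python
import json
import math


def joint_angle(a, b, c):
    """Angle in degrees at point b, formed by the segments b->a and b->c."""
    v1 = [p - q for p, q in zip(a, b)]
    v2 = [p - q for p, q in zip(c, b)]
    n1 = math.sqrt(sum(x * x for x in v1))
    n2 = math.sqrt(sum(x * x for x in v2))
    if n1 == 0 or n2 == 0:
        return 0.0  # degenerate case: two landmarks coincide
    cos = sum(x * y for x, y in zip(v1, v2)) / (n1 * n2)
    cos = max(-1.0, min(1.0, cos))  # guard against rounding error
    return math.degrees(math.acos(cos))


def match_pattern(angles, patterns, tolerance=15.0):
    """Return the name of the closest stored pattern, or None.

    Closeness is the mean absolute difference in degrees; a pattern
    only counts as a match if that difference is within `tolerance`.
    """
    best_name, best_err = None, tolerance
    for name, stored in patterns.items():
        if not stored or len(stored) != len(angles):
            continue
        err = sum(abs(x - y) for x, y in zip(angles, stored)) / len(stored)
        if err <= best_err:
            best_name, best_err = name, err
    return best_name


# Hypothetical stored patterns, as they might look after loading from JSON.
STORED_JSON = '{"hello": [170.0, 165.0, 160.0], "yes": [40.0, 35.0, 30.0]}'
patterns = json.loads(STORED_JSON)

live_angles = [168.0, 166.0, 158.0]
matched = match_pattern(live_angles, patterns)
```

Using angles rather than raw coordinates makes the comparison less sensitive to where the hand sits in the frame and how far it is from the camera.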

The project also includes text-to-speech functionality, which converts recognized text into spoken audio. This makes communication more natural for hearing users. In the other direction, when a user enters text or speech, the system matches that input against stored sign language content and produces sign-based output. In this way, the project works as a complete two-way communication solution rather than a one-way recognition tool.
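One possible shape for the text-to-sign direction is a lookup from words or short phrases to stored sign clips. The sketch below is an assumption about how such a lookup could work, not the project's actual implementation: the `SIGN_CLIPS` table, the file paths, and the fallback to per-letter images for unknown words are all hypothetical.

```python
import re

# Hypothetical mapping from known words or phrases to stored sign clips.
SIGN_CLIPS = {
    "hello": "signs/hello.mp4",
    "thank you": "signs/thank_you.mp4",
}


def text_to_signs(text, clips=SIGN_CLIPS, max_phrase=3):
    """Turn input text into an ordered list of sign media paths.

    Longer phrases are tried first; words with no stored clip fall back
    to one image per letter (an assumed fingerspelling fallback).
    """
    words = re.findall(r"[a-z']+", text.lower())
    output = []
    i = 0
    while i < len(words):
        for size in range(max_phrase, 0, -1):
            chunk = words[i:i + size]
            phrase = " ".join(chunk)
            if len(chunk) == size and phrase in clips:
                output.append(clips[phrase])
                i += size
                break
        else:
            # No clip found: spell the word letter by letter.
            output.extend(f"letters/{ch}.png" for ch in words[i] if ch.isalpha())
            i += 1
    return output


playlist = text_to_signs("Hello, thank you Sam")
```

The resulting list can be played back in order by the frontend. Recognized speech would follow the same path once it has been transcribed to text.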

The system interface includes live sign detection, pose capture, video recording, playback, and system controls. These modules make the platform easy to use for both technical and non-technical users. Overall, the project combines machine learning, computer vision, gesture tracking, and web technologies into a single platform that supports real-time communication, improved accessibility, and sign language learning.